Finding the Best Portfolio Optimization Technique

A comparative study of modern portfolio construction methods on crypto markets

Introduction

This article provides a rigorous, hands-on walkthrough of portfolio optimization methods used in both research and industry. Rather than focusing on theory alone, we emphasize how these approaches behave in practice: what they optimize, which constraints matter, and the trade-offs they impose.

After briefly introducing the minimal notation, we move directly into the major families of portfolio construction techniques, including mean–variance, risk-based, and hierarchical approaches. The goal is not just to present these methods, but to understand when and why they work (or fail), especially in noisy and high-volatility environments.

Prerequisite (recommended):

This article assumes basic familiarity with Markowitz Mean–Variance Optimization (MVO). For full derivations of the efficient frontier, the tangency portfolio, and a detailed discussion of estimation error and its practical remedies (e.g., shrinkage, factor models, Black–Litterman, and robust optimization), see:

Notation and Setup

Consider n risky assets with (random) single-period returns r ∈ ℝⁿ. A portfolio is represented by weights w ∈ ℝⁿ. In the simplest setting, the portfolio return is

$$
r_p = w^\top r.
$$

Let the expected returns be

$$
\mu = \mathbb{E}[r] \in \mathbb{R}^n
$$

and the covariance matrix be

$$
\Sigma = \operatorname{Cov}(r) = \mathbb{E}\big[(r - \mu)(r - \mu)^\top\big] \in \mathbb{R}^{n \times n}.
$$

Then the portfolio’s expected return and variance are

$$
\mathbb{E}[r_p] = w^\top \mu, \qquad \operatorname{Var}(r_p) = w^\top \Sigma w.
$$

A standard budget constraint is

$$
\mathbf{1}^\top w = 1,
$$

where the left-hand 𝟏 is the all-ones vector in ℝⁿ. Additional constraints may include long-only constraints w ≥ 0, leverage limits ‖w‖₁ ≤ L, box constraints l ≤ w ≤ u, factor exposure constraints, and turnover constraints. Note: if w ≥ 0 and 𝟏ᵀw = 1, then ‖w‖₁ = 1 is constant, so ℓ₁ leverage constraints or penalties matter primarily for long/short portfolios, for active weights a = w − w_b relative to a benchmark w_b, or for turnover terms ‖w_t − w_{t−1}‖₁.
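As a quick numerical illustration of the notation above, the following sketch computes a portfolio’s expected return and variance and checks the ℓ₁ remark. The three-asset μ, volatilities, and correlations are made-up numbers chosen only for illustration, and `portfolio_moments` is a hypothetical helper name.

```python
import numpy as np

# Hypothetical inputs for three assets (illustrative numbers, not estimates).
mu = np.array([0.0010, 0.0015, 0.0020])   # expected returns
vols = np.array([0.03, 0.05, 0.08])       # volatilities
corr = np.array([
    [1.0, 0.6, 0.4],
    [0.6, 1.0, 0.5],
    [0.4, 0.5, 1.0],
])
Sigma = np.outer(vols, vols) * corr       # covariance matrix


def portfolio_moments(w, mu, Sigma):
    """Return (expected return, variance) = (w'mu, w'Sigma w)."""
    w = np.asarray(w, dtype=float)
    return float(w @ mu), float(w @ Sigma @ w)


w_long_only = np.array([0.5, 0.3, 0.2])    # w >= 0 and sums to 1
w_long_short = np.array([0.8, 0.5, -0.3])  # sums to 1 but contains a short

for name, w in [("long-only", w_long_only), ("long/short", w_long_short)]:
    exp_ret, var = portfolio_moments(w, mu, Sigma)
    print(
        f"{name:10s} budget={w.sum():.2f}  l1={np.abs(w).sum():.2f}  "
        f"E[r_p]={exp_ret:.5f}  sigma_p={np.sqrt(var):.5f}"
    )
```

Both portfolios satisfy the budget constraint, but only the long/short one has an ℓ₁ norm above 1 (here 1.6, its gross exposure). This is why an ℓ₁ leverage limit is vacuous for a fully invested long-only portfolio.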

To set things up, let’s first import the necessary libraries and set the plot styles:

import os
import glob

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

from scipy import linalg
from scipy import optimize
from scipy.optimize import linprog
from scipy.cluster.hierarchy import linkage, to_tree, dendrogram
from scipy.spatial.distance import squareform

# Plot style defaults.
plt.rcParams.update(
    {
        "figure.figsize": (10, 6),
        "axes.grid": True,
        "grid.alpha": 0.3,
        "axes.titlesize": 14,
        "axes.labelsize": 12,
        "legend.fontsize": 10,
    }
)

We don’t want to trade stablecoins, so we’ll exclude them. The list also covers other pegged tokens, such as the gold-backed XAUT and PAXG:

STABLE_SYMBOLS = {
    "USDT", "USDC", "USDS", "USDE", "DAI", "PYUSD", "USD1", "XAUT", "USDF",
    "PAXG", "USDG", "RLUSD", "BFUSD", "USDD", "USDTB", "USD0", "FDUSD", "A7A5",
    "GHO", "TUSD", "CUSD", "USDB", "USR", "KAU", "EURC", "CRVUSD", "KAG",
    "BUSD", "USX", "FRAX", "USDC.N", "YLDS", "USDA", "AUSD", "DUSD", "SATUSD",
    "GUSD", "EURS", "DOLA", "FRXUSD", "PUSD", "AIDAUSDC", "USDZ", "AVUSD", "MNEE",
    "USDR", "PGOLD", "CASH", "EURCV", "SUSDA", "USDO", "FEUSD", "USDX", "YZUSD",
    "MIM", "USDKG", "REUSD", "USDM", "USD+", "USDP", "YUSD", "XUSD", "BOLD",
    "HYUSD", "LISUSD", "USTC", "USDN", "LUSD", "FXUSD", "HBD", "XTUSD", "HONEY",
    "EUSD", "ZCHF", "MIMATIC", "USN", "SUSD", "EURE", "BUCK", "MUSD", "EURA",
    "GYD", "USDH", "MSUSD", "UTY", "WEMIX$", "YU", "HOLLAR", "ALUSD", "XSGD",
    "EURR",
}

Next, some useful helper functions:

def _normalize_symbol(symbol: str) -> str:
    """Uppercase a symbol and drop non-alphanumeric characters (e.g. 'USDC.N' -> 'USDCN')."""
    return "".join(ch for ch in str(symbol).upper() if ch.isalnum())


def _base_symbol_from_ticker(ticker: str) -> str:
    """Extract the base asset from a ticker such as 'BTC_USD_1DAY_COMPOSITE'."""
    t = str(ticker).upper()
    suffix = "_USD_1DAY_COMPOSITE"
    if t.endswith(suffix):
        return t[:-len(suffix)]
    return t.split("_")[0]


STABLE_SYMBOLS_NORM = {_normalize_symbol(s) for s in STABLE_SYMBOLS}

If the real dataset can’t be loaded, we’ll fall back to synthetic data for demonstration purposes:

# Synthetic fallback data.
def synthetic_crypto_like_returns(
    n_assets: int = 12,
    n_obs: int = 1200,
    seed: int = 42,
    df_t: float = 5.0,
    regime_switch_prob: float = 0.02,
) -> pd.DataFrame:
    rng = np.random.default_rng(seed)
    dates = pd.date_range("2020-01-01", periods=n_obs, freq="D")

    # Build three correlation clusters.
    n_clusters = 3
    cluster_sizes = [n_assets // n_clusters] * n_clusters
    for i in range(n_assets % n_clusters):
        cluster_sizes[i] += 1
    clusters = np.concatenate([[k] * sz for k, sz in enumerate(cluster_sizes)])

    # 0.55 within a cluster, 0.15 across clusters, 1.0 on the diagonal.
    same_cluster = clusters[:, None] == clusters[None, :]
    base_corr = np.where(same_cluster, 0.55, 0.15)
    np.fill_diagonal(base_corr, 1.0)

    # Add symmetric jitter and keep the matrix positive definite.
    jitter = rng.normal(scale=0.03, size=(n_assets, n_assets))
    jitter = 0.5 * (jitter + jitter.T)
    corr = base_corr + jitter
    np.fill_diagonal(corr, 1.0)
    eig = np.linalg.eigvalsh(corr)
    if eig.min() <= 1e-6:
        # Diagonal shift; the diagonal is then slightly above 1.
        corr += (abs(eig.min()) + 1e-3) * np.eye(n_assets)

    # Give each asset a different daily volatility.
    base_vol = rng.uniform(0.02, 0.08, size=n_assets)
    cov = np.outer(base_vol, base_vol) * corr
    L = np.linalg.cholesky(cov)

    # Switch between low- and high-volatility regimes.
    vol_mult = np.ones(n_obs)
    state = 0
    for t in range(1, n_obs):
        if rng.random() < regime_switch_prob:
            state = 1 - state
        vol_mult[t] = 1.0 if state == 0 else 2.0

    # Student-t shocks via normal / sqrt(chi2/df).
    Z = rng.standard_normal(size=(n_obs, n_assets))
    chi2 = rng.chisquare(df=df_t, size=n_obs) / df_t
    t_innov = Z / np.sqrt(chi2)[:, None]
    rets = (t_innov @ L.T) * vol_mult[:, None]

    cols = [f"Asset_{i:02d}" for i in range(n_assets)]
    return pd.DataFrame(rets, index=dates, columns=cols)

The function for loading actual data (we are using CoinAPI):

# Loader for real data files.
def load_crypto_dataset_from_dir(
    data_dir: str = "/Users/admin/Documents/Projects/PortfolioOptimization/data",
    max_assets: int = 100,
    min_obs: int = 365,
    coverage_threshold: float = 0.98,
    selection_metric: str = "mean_volume",  # 'mean_volume' or 'n_obs'
    verbose: bool = True,
):
    if not os.path.isdir(data_dir):
        raise FileNotFoundError(f"Directory not found: {data_dir}")

    csv_files = sorted(glob.glob(os.path.join(data_dir, "*.csv")))
    if len(csv_files) == 0:
        raise FileNotFoundError(f"No CSV files found in {data_dir}")

    records = []
    price_series = {}
    excluded_stables = []

    for fp in csv_files:
        sym = os.path.splitext(os.path.basename(fp))[0].upper()
        base_sym = _base_symbol_from_ticker(sym)
        if _normalize_symbol(base_sym) in STABLE_SYMBOLS_NORM:
            excluded_stables.append(base_sym)
            continue

        try:
            df = pd.read_csv(fp)
        except Exception as e:
            if verbose:
                print(f"[WARN] Skipping {fp} (read error: {e})")
            continue

        if "date" not in df.columns or "close" not in df.columns:
            if verbose:
                print(f"[WARN] Skipping {fp} (missing required columns 'date'/'close')")
            continue

        keep_cols = [c for c in df.columns if c in ("date", "close", "volume_usd")]
        df = df.loc[:, keep_cols].copy()
        df["date"] = pd.to_datetime(df["date"], errors="coerce")
        df["close"] = pd.to_numeric(df["close"], errors="coerce")
        df = df.dropna(subset=["date", "close"]).sort_values("date")
        # Non-positive closes would turn into -inf/NaN log prices, so drop them.
        df = df[df["close"] > 0]
        df = df.drop_duplicates(subset=["date"], keep="last")
        if df.shape[0] < min_obs:
            continue

        s = df.set_index("date")["close"].astype(float)
        price_series[sym] = s

        if "volume_usd" in df.columns:
            mean_volume = float(pd.to_numeric(df["volume_usd"], errors="coerce").mean())
        else:
            mean_volume = np.nan

        records.append(
            {
                "symbol": sym,
                "n_obs": int(df.shape[0]),
                "start": df["date"].min(),
                "end": df["date"].max(),
                "mean_volume_usd": mean_volume,
                "file": fp,
            }
        )

    if len(price_series) < 2:
        raise ValueError(f"Insufficient usable assets in {data_dir} after filtering (min_obs={min_obs}).")

    info = pd.DataFrame(records).set_index("symbol")

    # Pick the sorting column.
    if selection_metric == "mean_volume" and info["mean_volume_usd"].notna().any():
        sort_by = "mean_volume_usd"
    else:
        sort_by = "n_obs"

    info = info.sort_values(by=sort_by, ascending=False, na_position="last")
    selected = list(info.index[:max_assets])

    prices = pd.concat({sym: price_series[sym] for sym in selected}, axis=1).sort_index()
    rets = np.log(prices).diff()

    # Filter assets again after return coverage is computed.
    coverage = rets.notna().mean()
    keep_cols = coverage[coverage >= coverage_threshold].index.tolist()
    prices = prices[keep_cols]
    rets = rets[keep_cols]
    info = info.loc[keep_cols]

    before_rows = rets.shape[0]
    rets = rets.dropna(how="any")  # intersection of dates
    prices = prices.loc[rets.index]
    after_rows = rets.shape[0]

    if verbose:
        if excluded_stables:
            print(f"[DATA] Excluded {len(set(excluded_stables))} stable symbols before selection.")
        print(f"[DATA] Loaded {len(keep_cols)} assets from {data_dir} (sorted by {sort_by}).")
        print(f"[DATA] Date range: {rets.index.min().date()} → {rets.index.max().date()} ({after_rows} daily obs).")
        print(
            "[DATA] Missing-data policy: drop any date with any missing return across selected assets "
            f"(kept {after_rows}/{before_rows} rows after alignment)."
        )

    if after_rows < 120:
        raise ValueError("Not enough aligned observations after missing-data handling.")

    return prices, rets, info

The following wrapper loads the real dataset and falls back to the synthetic generator if loading fails:

def get_crypto_returns(
    data_dir: str = "/Users/admin/Documents/Projects/PortfolioOptimization/data",
    max_assets: int = 100,
    min_obs: int = 365,
    coverage_threshold: float = 0.98,
    seed_fallback: int = 42,
    verbose: bool = True,
):
    try:
        prices, rets, info = load_crypto_dataset_from_dir(
            data_dir=data_dir,
            max_assets=max_assets,
            min_obs=min_obs,
            coverage_threshold=coverage_threshold,
            verbose=verbose,
        )
        source = "real"
    except Exception as e:
        if verbose:
            print(f"[FALLBACK] Using synthetic data because loading real data from {data_dir} failed: {e}")
        # Synthetic returns have no underlying price series.
        prices = None
        rets = synthetic_crypto_like_returns(n_assets=max_assets, n_obs=1200, seed=seed_fallback)
        info = pd.DataFrame(
            {"symbol": rets.columns, "n_obs": len(rets), "mean_volume_usd": np.nan}
        ).set_index("symbol")
        source = "synthetic"

    return prices, rets, info, source

Next, some mathematical helper functions:

# Basic math helpers.
def regularize_cov(S: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Symmetrize and add a small diagonal jitter for numerical stability."""
    S = np.asarray(S)
    S = 0.5 * (S + S.T)
    return S + eps * np.eye(S.shape[0])


def annualized_return(log_r: pd.Series, periods_per_year: int = 365) -> float:
    """Geometric annualized return from log returns."""
    years = len(log_r) / periods_per_year
    return float(np.exp(log_r.sum() / years) - 1.0) if years > 0 else np.nan


def annualized_vol(log_r: pd.Series, periods_per_year: int = 365) -> float:
    """Annualized volatility of log returns (square-root-of-time scaling)."""
    return float(log_r.std(ddof=1) * np.sqrt(periods_per_year))


def sharpe_ratio(log_r: pd.Series, rf_annual: float = 0.0, periods_per_year: int = 365) -> float:
    """Annualized Sharpe ratio of log returns in excess of a constant risk-free rate."""
    rf_log = np.log1p(rf_annual) / periods_per_year
    ex = log_r - rf_log
    vol = ex.std(ddof=1) * np.sqrt(periods_per_year)
    mean = ex.mean() * periods_per_year
    return float(mean / vol) if vol > 0 else np.nan


def max_drawdown(log_r: pd.Series) -> float:
    """Maximum drawdown (a non-positive number) of the equity curve implied by log returns."""
    equity = np.exp(log_r.cumsum())
    # Treat the starting capital (1.0) as a peak so that an initial loss counts as a drawdown.
    peak = equity.cummax().clip(lower=1.0)
    dd = equity / peak - 1.0
    return float(dd.min())


def empirical_cvar(log_r: np.ndarray, alpha: float = 0.95) -> float:
    """
    Empirical CVaR of *losses* using simple returns:
        loss = - (exp(log_r) - 1)
    Averages the worst ceil((1 - alpha) * N) losses.
    """
    simple = np.expm1(log_r)
    losses = -simple
    k = max(1, int(np.ceil((1 - alpha) * len(losses))))
    tail = np.sort(losses)[-k:]
    return float(tail.mean())

The following code loads our data and runs some quick diagnostics:

# Load real data if available, otherwise use synthetic data.
crypto_prices, crypto_returns, asset_info, DATA_SOURCE = get_crypto_returns(
    data_dir="/Users/admin/Documents/Projects/PortfolioOptimization/data",
    max_assets=40,
    min_obs=365,
    coverage_threshold=0.98,
    seed_fallback=42,
    verbose=True,
)

print(f"\n[INFO] Data source: {DATA_SOURCE}")
print(f"[INFO] Returns shape: {crypto_returns.shape[0]} dates × {crypto_returns.shape[1]} assets")
print(f"[INFO] Assets: {list(crypto_returns.columns)}")
print("\n[INFO] Asset info (head):")
print(asset_info.head())

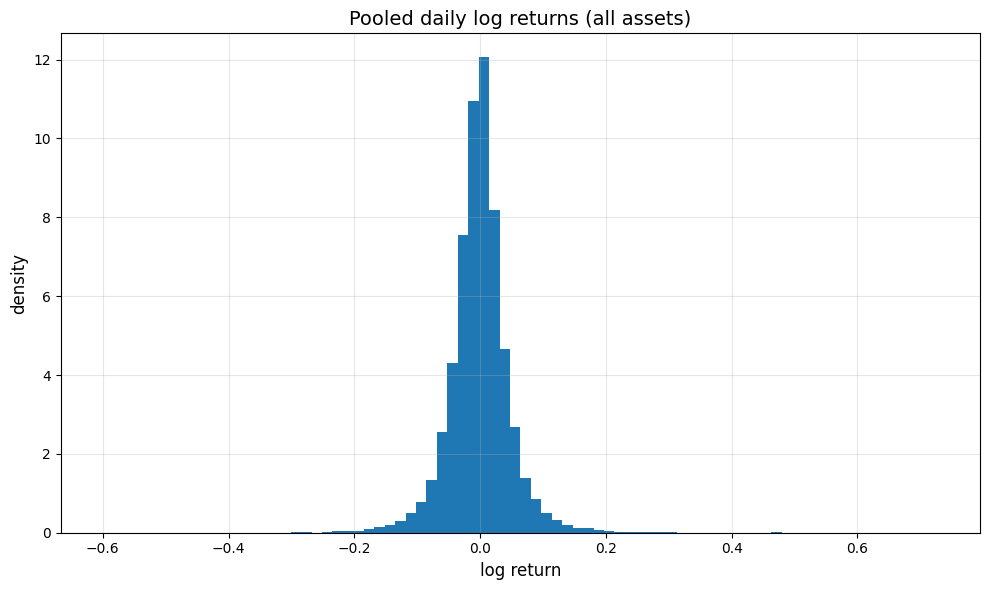
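The loader’s missing-data policy (compute log returns, drop assets whose return coverage is below a threshold, then keep only the dates on which every remaining asset has a return) can be illustrated on a toy price panel. This is a minimal sketch with made-up prices; the asset names and the 0.6 threshold are assumptions chosen to make the effect visible, whereas the article’s loader uses 0.98.

```python
import numpy as np
import pandas as pd

dates = pd.date_range("2024-01-01", periods=10, freq="D")
prices = pd.DataFrame(
    {
        "A": np.linspace(100.0, 109.0, 10),  # complete history
        "B": np.linspace(50.0, 59.0, 10),    # one missing close (set below)
        "C": np.linspace(10.0, 19.0, 10),    # listed late (set below)
    },
    index=dates,
)
prices.loc[dates[4], "B"] = np.nan
prices.loc[dates[:6], "C"] = np.nan

# Log returns: the first row is always NaN, and a single missing price
# removes two returns (the day itself and the following day).
rets = np.log(prices).diff()

# Step 1: drop assets with too little return coverage.
coverage = rets.notna().mean()
threshold = 0.6  # toy value for this example
keep = coverage[coverage >= threshold].index.tolist()

# Step 2: keep only dates where all remaining assets have a return.
aligned = rets[keep].dropna(how="any")

print(coverage.round(2).to_dict())
print("kept assets:", keep)
print(f"kept {len(aligned)}/{len(rets)} rows after alignment")
```

Filtering on coverage first matters: if the late-listed asset C were kept, the intersection of dates would shrink to its short history and discard most of the data for A and B.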

# Quick diagnostics before the experiments.
pooled = crypto_returns.stack()
print(f"\n[DIAGNOSTIC] Pooled daily log-return mean: {pooled.mean():.6f}")
print(f"[DIAGNOSTIC] Pooled daily log-return std : {pooled.std(ddof=1):.6f}")
print(f"[DIAGNOSTIC] Pooled excess kurtosis : {pooled.kurt():.2f}")

# Plot pooled return histogram and correlation heatmap.
fig, ax = plt.subplots()
ax.hist(pooled.values, bins=80, density=True)
ax.set_title("Pooled daily log returns (all assets)")
ax.set_xlabel("log return")
ax.set_ylabel("density")
plt.tight_layout()
plt.show()

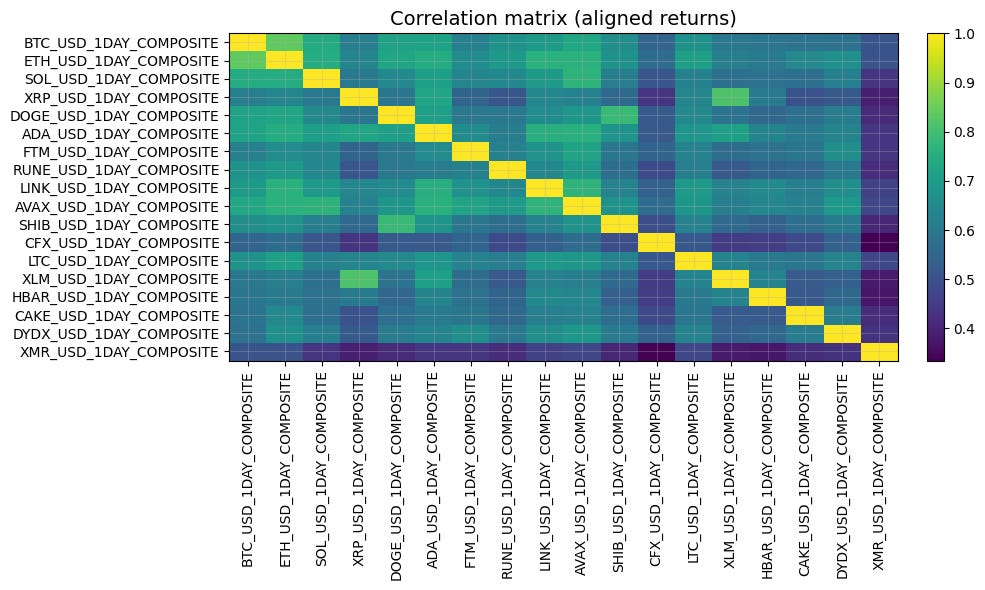

corr = crypto_returns.corr().values

fig, ax = plt.subplots()
im = ax.imshow(corr, aspect="auto")
ax.set_title("Correlation matrix (aligned returns)")
ax.set_xticks(range(crypto_returns.shape[1]))
ax.set_yticks(range(crypto_returns.shape[1]))
ax.set_xticklabels(crypto_returns.columns, rotation=90)
ax.set_yticklabels(crypto_returns.columns)
plt.colorbar(im, ax=ax, fraction=0.046, pad=0.04)
plt.tight_layout()
plt.show()

[DATA] Excluded 17 stable symbols before selection.
[DATA] Loaded 18 assets from /Users/admin/Documents/Projects/PortfolioOptimization/data (sorted by mean_volume_usd).
[DATA] Date range: 2022-01-04 → 2026-01-16 (1472 daily obs).
[DATA] Missing-data policy: drop any date with any missing return across selected assets (kept 1472/1477 rows after alignment).

[INFO] Data source: real
[INFO] Returns shape: 1472 dates × 18 assets
[INFO] Assets: ['BTC_USD_1DAY_COMPOSITE', 'ETH_USD_1DAY_COMPOSITE', 'SOL_USD_1DAY_COMPOSITE', 'XRP_USD_1DAY_COMPOSITE', 'DOGE_USD_1DAY_COMPOSITE', 'ADA_USD_1DAY_COMPOSITE', 'FTM_USD_1DAY_COMPOSITE', 'RUNE_USD_1DAY_COMPOSITE', 'LINK_USD_1DAY_COMPOSITE', 'AVAX_USD_1DAY_COMPOSITE', 'SHIB_USD_1DAY_COMPOSITE', 'CFX_USD_1DAY_COMPOSITE', 'LTC_USD_1DAY_COMPOSITE', 'XLM_USD_1DAY_COMPOSITE', 'HBAR_USD_1DAY_COMPOSITE', 'CAKE_USD_1DAY_COMPOSITE', 'DYDX_USD_1DAY_COMPOSITE', 'XMR_USD_1DAY_COMPOSITE']

[INFO] Asset info (head):
                         n_obs      start        end  mean_volume_usd  \
symbol
BTC_USD_1DAY_COMPOSITE    1477 2022-01-01 2026-01-16     1.168389e+09
ETH_USD_1DAY_COMPOSITE    1477 2022-01-01 2026-01-16     6.636419e+08
SOL_USD_1DAY_COMPOSITE    1477 2022-01-01 2026-01-16     2.891621e+08
XRP_USD_1DAY_COMPOSITE    1477 2022-01-01 2026-01-16     2.603798e+08
DOGE_USD_1DAY_COMPOSITE   1477 2022-01-01 2026-01-16     1.068017e+08

                                                                      file
symbol
BTC_USD_1DAY_COMPOSITE   /Users/admin/Documents/Projects/PortfolioOptim...
ETH_USD_1DAY_COMPOSITE   /Users/admin/Documents/Projects/PortfolioOptim...
SOL_USD_1DAY_COMPOSITE   /Users/admin/Documents/Projects/PortfolioOptim...
XRP_USD_1DAY_COMPOSITE   /Users/admin/Documents/Projects/PortfolioOptim...
DOGE_USD_1DAY_COMPOSITE  /Users/admin/Documents/Projects/PortfolioOptim...

[DIAGNOSTIC] Pooled daily log-return mean: -0.000667
[DIAGNOSTIC] Pooled daily log-return std : 0.049747
[DIAGNOSTIC] Pooled excess kurtosis : 13.75
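Before moving on, the drawdown and CVaR helpers defined earlier can be sanity-checked on a series small enough to verify by hand. The snippet restates compact versions of both helpers so that it runs on its own; the eight log returns and the 75% confidence level are made-up values for illustration, and the drawdown version here counts the starting capital of 1.0 as a peak.

```python
import numpy as np
import pandas as pd


def max_drawdown(log_r: pd.Series) -> float:
    """Largest peak-to-trough loss of the equity curve (non-positive)."""
    equity = np.exp(log_r.cumsum())
    peak = equity.cummax().clip(lower=1.0)  # starting capital counts as a peak
    return float((equity / peak - 1.0).min())


def empirical_cvar(log_r, alpha: float = 0.95) -> float:
    """Mean of the worst ceil((1 - alpha) * N) simple-return losses."""
    losses = -np.expm1(np.asarray(log_r, dtype=float))
    k = max(1, int(np.ceil((1 - alpha) * len(losses))))
    return float(np.sort(losses)[-k:].mean())


# Toy daily log returns (illustrative only).
log_r = pd.Series([0.02, -0.05, 0.01, -0.10, 0.03, 0.00, -0.01, 0.04])

mdd = max_drawdown(log_r)
cvar_75 = empirical_cvar(log_r.values, alpha=0.75)

# Hand calculation: the peak is reached after day 1 (cumulative log return 0.02),
# the trough after day 4 (cumulative -0.12), so the drawdown is exp(-0.14) - 1.
mdd_by_hand = np.exp(-0.14) - 1.0
# With N = 8 and alpha = 0.75 the tail holds the 2 worst days (-0.10 and -0.05).
cvar_by_hand = np.mean([1.0 - np.exp(-0.10), 1.0 - np.exp(-0.05)])

print(f"max drawdown: {mdd:.4f} (by hand {mdd_by_hand:.4f})")
print(f"CVaR 75%    : {cvar_75:.4f} (by hand {cvar_by_hand:.4f})")
```

The drawdown comes out at about −13.1% and the 75% CVaR at about 7.2%. Note that the CVaR is reported as a positive loss in simple-return terms even though the inputs are log returns.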

1. Baseline: MVO Recap

We use MVO as the reference point for the rest of this notebook. For full derivations and numerical examples, see the following article:

1.1 Canonical formulations

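(MVO-1) Minimum variance at target return:

$$
\min_{w} \; w^\top \Sigma w \quad \text{s.t.} \quad w^\top \mu = \mu_p, \quad \mathbf{1}^\top w = 1.
$$

(MVO-2) Penalized mean–variance utility, written here with the common ½ scaling on the risk term and risk-aversion parameter γ > 0:

$$
\max_{w} \; w^\top \mu - \frac{\gamma}{2}\, w^\top \Sigma w \quad \text{s.t.} \quad \mathbf{1}^\top w = 1.
$$
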
Varying μ_p in (MVO-1) or γ in (MVO-2) traces the (constraint-dependent) efficient frontier: the set of portfolios that are not dominated in (σ, E[r]) space.
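To make the link between the two formulations concrete, here is a minimal sketch that solves both in the unconstrained case (budget constraint only) and checks that they land on the same frontier. It assumes (MVO-1) is "minimize wᵀΣw subject to wᵀμ = μ_p and 𝟏ᵀw = 1" and (MVO-2) is "maximize wᵀμ − (γ/2)wᵀΣw subject to 𝟏ᵀw = 1"; the function names `solve_mvo1` and `solve_mvo2` and the three-asset inputs are hypothetical.

```python
import numpy as np

# Hypothetical three-asset inputs (illustrative numbers, not estimates).
mu = np.array([0.0010, 0.0015, 0.0020])
vols = np.array([0.03, 0.05, 0.08])
corr = np.array([[1.0, 0.6, 0.4], [0.6, 1.0, 0.5], [0.4, 0.5, 1.0]])
Sigma = np.outer(vols, vols) * corr


def solve_mvo1(mu, Sigma, mu_p):
    """Min w'Sigma w s.t. mu'w = mu_p and 1'w = 1, via the KKT linear system."""
    n = len(mu)
    ones = np.ones(n)
    K = np.zeros((n + 2, n + 2))
    K[:n, :n] = 2.0 * Sigma      # gradient of w'Sigma w
    K[:n, n] = mu                # multiplier column for the return constraint
    K[:n, n + 1] = ones          # multiplier column for the budget constraint
    K[n, :n] = mu
    K[n + 1, :n] = ones
    rhs = np.concatenate([np.zeros(n), [mu_p, 1.0]])
    return np.linalg.solve(K, rhs)[:n]


def solve_mvo2(mu, Sigma, gamma):
    """Max mu'w - (gamma/2) w'Sigma w s.t. 1'w = 1, in closed form."""
    ones = np.ones(len(mu))
    Si_mu = np.linalg.solve(Sigma, mu)
    Si_1 = np.linalg.solve(Sigma, ones)
    eta = (ones @ Si_mu - gamma) / (ones @ Si_1)  # budget-constraint multiplier
    return (Si_mu - eta * Si_1) / gamma


# Solve the utility form, then feed its expected return to the target-return form.
w_util = solve_mvo2(mu, Sigma, gamma=5.0)
mu_p = float(w_util @ mu)
w_target = solve_mvo1(mu, Sigma, mu_p)

# Closed-form frontier variance at mu_p, using the scalars A, B, C, D.
ones = np.ones(len(mu))
A_ = mu @ np.linalg.solve(Sigma, mu)
B_ = mu @ np.linalg.solve(Sigma, ones)
C_ = ones @ np.linalg.solve(Sigma, ones)
D_ = A_ * C_ - B_**2
var_closed_form = (C_ * mu_p**2 - 2.0 * B_ * mu_p + A_) / D_

print("max |w_util - w_target|:", np.abs(w_util - w_target).max())
print("variance (weights)     :", w_target @ Sigma @ w_target)
print("variance (closed form) :", var_closed_form)
```

Each γ in the utility form corresponds to exactly one target return μ_p on the efficient branch, which is why sweeping either parameter traces the same curve. With additional constraints such as w ≥ 0 the linear systems above no longer apply and a numerical solver is needed.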

1.2 Two anchor portfolios

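Global minimum variance (GMV) (unconstrained):

$$
w_{\mathrm{GMV}} = \frac{\Sigma^{-1}\mathbf{1}}{\mathbf{1}^\top \Sigma^{-1}\mathbf{1}}.
$$

With a risk-free rate r_f and excess returns μ_e = μ − r_f 𝟏, the tangency / maximum Sharpe portfolio (unconstrained, and assuming 𝟏ᵀΣ⁻¹μ_e > 0) is:

$$
w_{\mathrm{tan}} = \frac{\Sigma^{-1}\mu_e}{\mathbf{1}^\top \Sigma^{-1}\mu_e}.
$$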

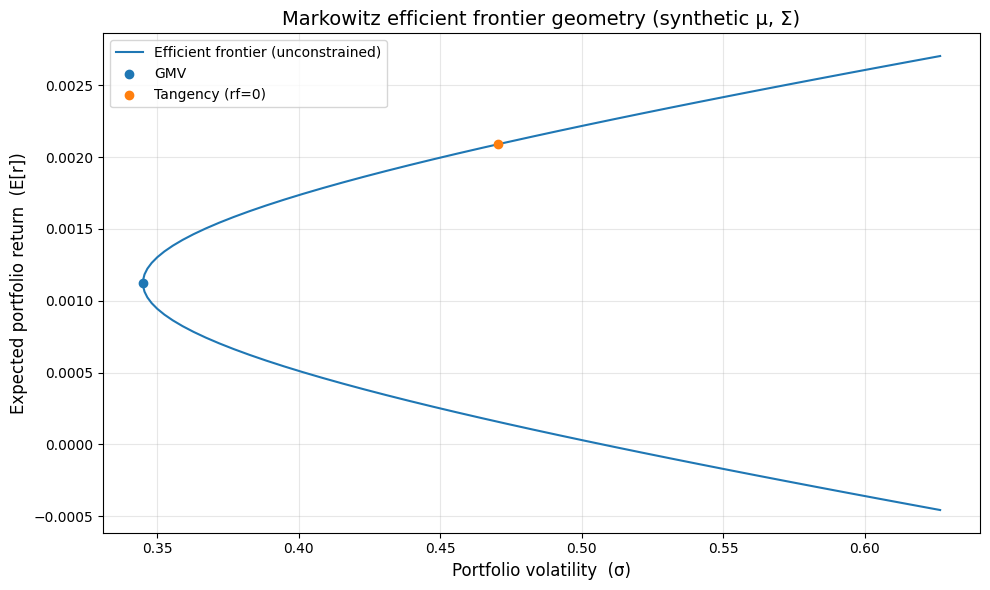
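A brute-force check can make the two anchor portfolios less abstract: among many random fully invested portfolios, none should have lower variance than the GMV portfolio and none should have a higher Sharpe ratio than the tangency portfolio. The sketch below uses the standard closed forms w_GMV ∝ Σ⁻¹𝟏 and w_tan ∝ Σ⁻¹μ_e; the three-asset inputs, the zero risk-free rate, and the sample size are assumptions made for illustration.

```python
import numpy as np

# Hypothetical inputs: three assets with a constant correlation of 0.3.
vols = np.array([0.04, 0.06, 0.08])
corr = np.full((3, 3), 0.3)
np.fill_diagonal(corr, 1.0)
Sigma = np.outer(vols, vols) * corr
mu = np.array([0.0006, 0.0008, 0.0012])
r_f = 0.0
ones = np.ones(3)
mu_e = mu - r_f * ones

# Closed-form anchor portfolios (solve linear systems instead of inverting Sigma).
Si_1 = np.linalg.solve(Sigma, ones)
Si_mu = np.linalg.solve(Sigma, mu_e)
w_gmv = Si_1 / (ones @ Si_1)
w_tan = Si_mu / (ones @ Si_mu)  # valid as max-Sharpe only if ones @ Si_mu > 0

gmv_var = float(w_gmv @ Sigma @ w_gmv)
tan_sharpe = float((w_tan @ mu_e) / np.sqrt(w_tan @ Sigma @ w_tan))

# Random portfolios that satisfy the budget constraint (shorts allowed).
rng = np.random.default_rng(1)
X = rng.normal(size=(5000, 3))
W = X / X.sum(axis=1, keepdims=True)
rand_var = np.einsum("ij,jk,ik->i", W, Sigma, W)
rand_sharpe = (W @ mu_e) / np.sqrt(rand_var)

print(f"GMV variance       : {gmv_var:.6e}  (best random: {rand_var.min():.6e})")
print(f"Tangency Sharpe    : {tan_sharpe:.5f}  (best random: {rand_sharpe.max():.5f})")
```

The sign condition on 𝟏ᵀΣ⁻¹μ_e is worth checking in practice: when it is negative, normalizing Σ⁻¹μ_e to sum to one flips the portfolio onto the inefficient branch and it then minimizes rather than maximizes the Sharpe ratio.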

We construct a simple synthetic market with a random covariance matrix and expected returns, allowing us to study the geometry of mean–variance optimization in a controlled setting.

Using closed-form solutions, we compute the global minimum variance (GMV) portfolio and the tangency portfolio (maximum Sharpe ratio). We then sweep across target returns to trace out the efficient frontier, which shows the best achievable return for each level of risk.

# Synthetic efficient frontier example.
rng = np.random.default_rng(0)
n = 6
A = rng.normal(size=(n, n))
Sigma = A @ A.T  # SPD covariance
mu = rng.normal(loc=0.0005, scale=0.0010, size=n)  # daily expected log returns
ones = np.ones(n)

Sigma = regularize_cov(Sigma, eps=1e-10)
Sigma_inv = linalg.inv(Sigma)

# GMV portfolio.
w_gmv = Sigma_inv @ ones / (ones @ Sigma_inv @ ones)
mu_gmv = float(w_gmv @ mu)
vol_gmv = float(np.sqrt(w_gmv @ Sigma @ w_gmv))

# Tangency portfolio (rf=0).
w_tan_unnorm = Sigma_inv @ mu
w_tan = w_tan_unnorm / (ones @ w_tan_unnorm)
mu_tan = float(w_tan @ mu)
vol_tan = float(np.sqrt(w_tan @ Sigma @ w_tan))

# Closed-form frontier with budget and target-return constraints.
A_ = mu @ Sigma_inv @ mu
B_ = mu @ Sigma_inv @ ones
C_ = ones @ Sigma_inv @ ones
D_ = A_ * C_ - B_**2

# Sweep target returns around GMV. The sweep covers both branches of the
# minimum-variance frontier; only targets at or above mu_gmv are efficient.
targets = np.linspace(mu_gmv - 1.5 * np.std(mu), mu_gmv + 1.5 * np.std(mu), 80)

# Frontier variance at each target return mu_p.
frontier_var = (C_ * targets**2 - 2.0 * B_ * targets + A_) / D_
frontier_vol = np.sqrt(frontier_var)

# Plot volatility vs expected return.
fig, ax = plt.subplots()
ax.plot(frontier_vol, targets, label="Efficient frontier (unconstrained)")
ax.scatter([vol_gmv], [mu_gmv], label="GMV", zorder=3)
ax.scatter([vol_tan], [mu_tan], label="Tangency (rf=0)", zorder=3)
ax.set_title("Markowitz efficient frontier geometry (synthetic μ, Σ)")
ax.set_xlabel("Portfolio volatility (σ)")
ax.set_ylabel("Expected portfolio return (E[r])")
ax.legend()
plt.tight_layout()
plt.show()

print("[SYNTHETIC SUMMARY]")
print(f"GMV: E[r]={mu_gmv:.6f}, σ={vol_gmv:.6f}, sum(w)={w_gmv.sum():.3f}")
print(f"Tangency E[r]={mu_tan:.6f}, σ={vol_tan:.6f}, sum(w)={w_tan.sum():.3f}")
print("\nGMV weights:", np.round(w_gmv, 4))
print("Tan weights:", np.round(w_tan, 4))

[SYNTHETIC SUMMARY]
GMV: E[r]=0.001123, σ=0.344942, sum(w)=1.000
Tangency E[r]=0.002090, σ=0.470481, sum(w)=1.000

GMV weights: [ 0.2473  0.3281 -0.0938 -0.0937 -0.0412  0.6533]
Tan weights: [ 0.1141  0.7469 -0.174  -0.1761 -0.512   1.0012]
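One way to read the geometry of this example is through two-fund separation: in the unconstrained case, every portfolio on the minimum-variance frontier is an affine combination of the GMV and tangency portfolios. The sketch below rebuilds the same synthetic market (same seed and draw order, with the diagonal jitter added inline) and checks that claim numerically. The helper `frontier_weights` and the chosen target return are hypothetical names and values introduced for the illustration.

```python
import numpy as np

# Rebuild the synthetic market from the example above.
rng = np.random.default_rng(0)
n = 6
A = rng.normal(size=(n, n))
Sigma = A @ A.T + 1e-10 * np.eye(n)
mu = rng.normal(loc=0.0005, scale=0.0010, size=n)
ones = np.ones(n)

Si_1 = np.linalg.solve(Sigma, ones)
Si_mu = np.linalg.solve(Sigma, mu)
A_, B_, C_ = mu @ Si_mu, mu @ Si_1, ones @ Si_1
D_ = A_ * C_ - B_**2

w_gmv = Si_1 / C_    # expected return B/C, variance 1/C
w_tan = Si_mu / B_   # expected return A/B, variance A/B^2 (rf = 0)


def frontier_weights(mu_p):
    """Minimum-variance weights for target return mu_p (budget constraint only)."""
    return ((A_ - B_ * mu_p) * Si_1 + (C_ * mu_p - B_) * Si_mu) / D_


# Pick a target return halfway between the two anchors' expected returns.
mu_gmv, mu_tan = B_ / C_, A_ / B_
mu_p = 0.5 * (mu_gmv + mu_tan)
w_direct = frontier_weights(mu_p)

# Two-fund separation: mix GMV and tangency to hit the same target return.
a = (mu_p - mu_gmv) / (mu_tan - mu_gmv)
w_mix = (1.0 - a) * w_gmv + a * w_tan

var_formula = (C_ * mu_p**2 - 2.0 * B_ * mu_p + A_) / D_
print("max |w_direct - w_mix|:", np.abs(w_direct - w_mix).max())
print("variance (weights)    :", w_direct @ Sigma @ w_direct)
print("variance (formula)    :", var_formula)
```

This is why the two scatter points in the plot are called anchors: once they are known, the rest of the unconstrained frontier follows by mixing them. The property is lost as soon as inequality constraints such as long-only bounds become binding.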

1.3 Assumptions (why the baseline is clean, and why it breaks)

MVO is exactly optimal for an expected-utility investor under quadratic utility, or when returns are jointly normal (more generally, elliptically distributed), in a single-period setting with known (μ, Σ). In practice, (μ, Σ) must be estimated, markets are non-stationary, and constraints and transaction costs are unavoidable. The rest of this notebook is largely about methods that either (i) reduce dependence on fragile estimates, (ii) change the risk objective, or (iii) impose structure to improve out-of-sample stability.
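How fragile are the estimates? The following simulation is a minimal sketch of the problem, not a result from the article’s dataset. It assumes a hypothetical "true" market of five assets with equal expected returns, so that the true tangency and GMV portfolios coincide, draws repeated samples of 500 normally distributed daily returns, and compares how far the plug-in GMV and tangency weights drift from the truth.

```python
import numpy as np

rng = np.random.default_rng(7)
n, T, n_trials = 5, 500, 200

# Hypothetical true parameters (illustrative, not estimated from data).
mu_true = np.full(n, 0.0005)
vols = np.linspace(0.03, 0.07, n)
corr = np.full((n, n), 0.5)
np.fill_diagonal(corr, 1.0)
Sigma_true = np.outer(vols, vols) * corr
ones = np.ones(n)


def gmv(Sigma):
    """Global minimum variance weights (budget constraint only)."""
    x = np.linalg.solve(Sigma, ones)
    return x / x.sum()


def tangency(mu, Sigma):
    """Sigma^{-1} mu normalized to sum to one (rf = 0)."""
    x = np.linalg.solve(Sigma, mu)
    return x / x.sum()


w_gmv_true = gmv(Sigma_true)
w_tan_true = tangency(mu_true, Sigma_true)  # equals GMV because means are equal

err_gmv, err_tan = [], []
for _ in range(n_trials):
    R = rng.multivariate_normal(mu_true, Sigma_true, size=T)
    mu_hat = R.mean(axis=0)
    Sigma_hat = np.cov(R, rowvar=False)
    # L1 distance between estimated and true weights.
    err_gmv.append(np.abs(gmv(Sigma_hat) - w_gmv_true).sum())
    err_tan.append(np.abs(tangency(mu_hat, Sigma_hat) - w_tan_true).sum())

print(f"median L1 weight error, GMV     : {np.median(err_gmv):.3f}")
print(f"median L1 weight error, tangency: {np.median(err_tan):.3f}")
```

Both estimators see the same sample covariance, so the gap between the two error figures is driven by the noisy mean estimate alone. With a daily mean of 0.05% and daily volatilities of 3% to 7%, the standard error of each sample mean over 500 days exceeds the mean itself, which is the core reason plug-in tangency weights are unstable.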

2. How We’ll Compare Portfolio Optimization Methods in Practice
